A working cluster and a team that still needed me to operate it

Making the cluster less dependent on me.

Once Archibus was running in Kubernetes, the next question was whether someone other than me could actually operate it.

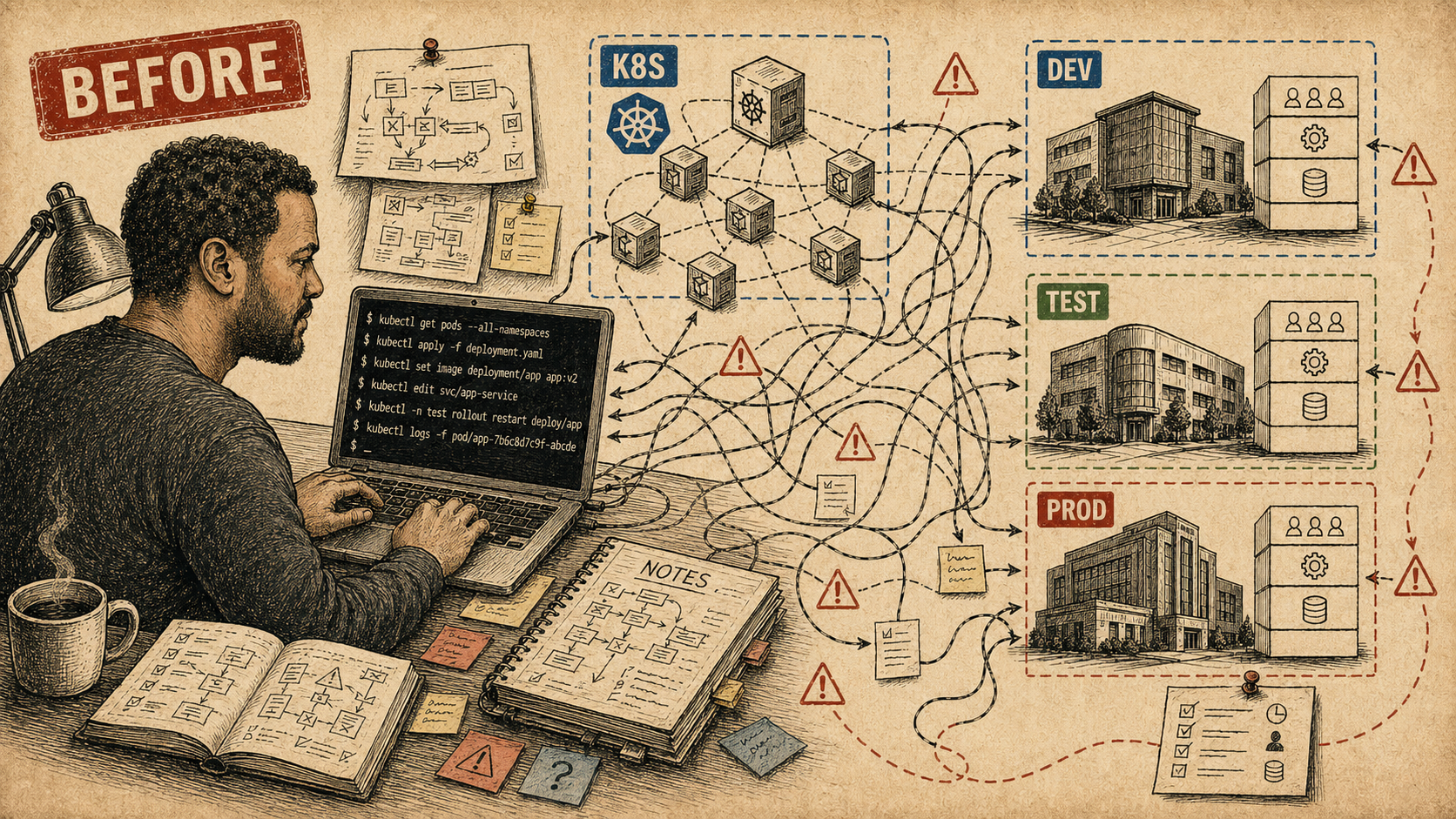

The research cluster answered the first question: Archibus could run in Kubernetes with a self-hosted identity layer and real SAML SSO. The problem that came after was slower to surface: I was the only one who could actually operate it.

That is not a team platform. It is a private operating model with Kubernetes underneath. If a shared dev environment drifted or broke, someone had to find me. If a deployment needed to happen, I ran it. If a team member wanted to understand what was currently running and why, the most accurate source was my shell history.

kubectl is not a team interface when only one person has the context to use it.

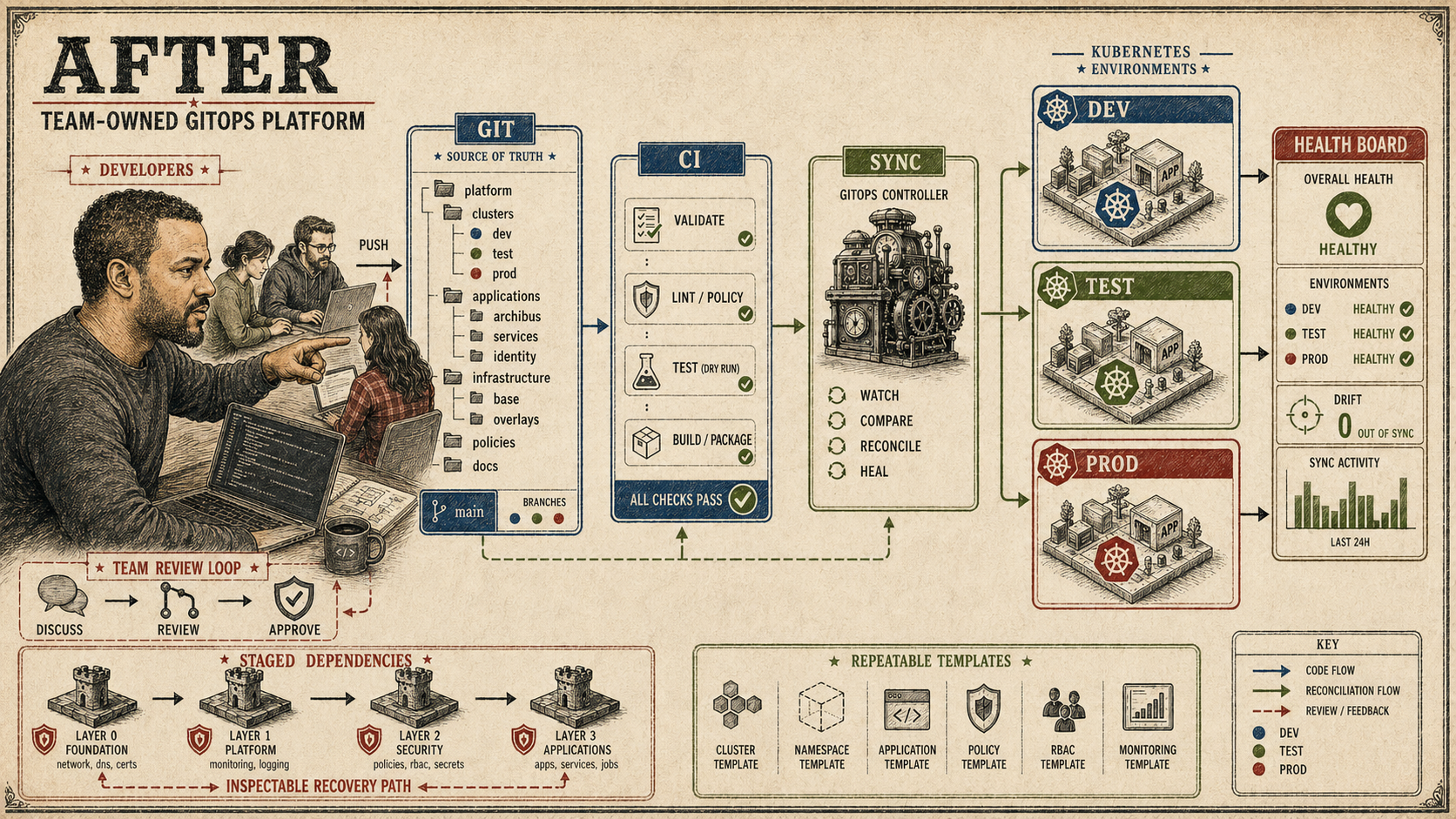

The goal was to fix that. Not by writing better runbooks, though runbooks helped. By making cluster state visible enough that another person could read it, and by making changes go through a process that did not require me at the terminal.

Making Kubernetes less private

The thing ArgoCD actually solved was visibility. Not deployment mechanics, though those mattered too. Visibility: what is deployed, what changed, is it healthy, does it match the source repo, what branch is it tracking? Those are questions someone can answer from an Application's page in the ArgoCD UI. They cannot answer them from a shell session they were not present for.

The first practical step was making branch names mean something operationally.

The dev and staging branches deployed automatically. The main branch required a manual gate before it touched production. That sounds obvious, but having it codified instead of remembered is the difference between a workflow and a convention that exists only as long as everyone recalls it.

```yaml
# Branch-to-environment map: dev and staging sync on their own, prod waits.
environments:
  - name: dev
    branch: dev
    autoSync: true
  - name: staging
    branch: staging
    autoSync: true
  - name: prod
    branch: main
    autoSync: false
```

Once the branch-to-environment mapping existed, the next question was repeatability. ApplicationSets let one template generate multiple environment-specific Applications from a list. The point was not to save time writing YAML. The point was that a new persistent Archibus dev or test environment followed the same shape as the ones before it, instead of being the product of a one-off shell session that someone had to reconstruct from memory.

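```yaml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
# Excerpt: metadata, template.metadata (the generated Application names),
# project, source.repoURL, and destination.server are omitted here.
spec:
  generators:
    # One generated Application per list element.
    - list:
        elements:
          - env: dev
            branch: dev
            namespace: archibus-dev
          - env: test
            branch: test
            namespace: archibus-test
  template:
    spec:
      source:
        # Each Application tracks its own branch and overlay directory.
        targetRevision: "{{branch}}"
        path: "overlays/archibus/{{env}}"
      destination:
        namespace: "{{namespace}}"
```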
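In ArgoCD terms, the autoSync flag in the environment map corresponds to whether an Application carries spec.syncPolicy.automated. With it, the controller syncs whenever the tracked branch moves. Without it, a new commit on main only marks the Application OutOfSync, and nothing changes in the cluster until someone triggers a sync. A minimal sketch of a gated prod Application, assuming a hypothetical archibus-prod name and namespace, a placeholder repo URL, the default project, an argocd install namespace, and the in-cluster API server (none of these come from the original setup):

```yaml
# Hypothetical prod Application. There is no syncPolicy.automated, so new
# commits on main show as OutOfSync until someone runs a manual sync.
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: archibus-prod                 # hypothetical name
  namespace: argocd                   # assumed ArgoCD install namespace
spec:
  project: default
  source:
    repoURL: https://git.example.com/platform/archibus-gitops.git  # placeholder
    targetRevision: main
    path: overlays/archibus/prod      # assumed to follow the dev/test overlay layout
  destination:
    server: https://kubernetes.default.svc
    namespace: archibus-prod          # hypothetical namespace
  # syncPolicy.automated is intentionally omitted: this is the manual gate.
  #
  # An auto-deploying environment (for example, the ApplicationSet template's
  # spec for dev and test) would instead carry:
  #   syncPolicy:
  #     automated:
  #       prune: true      # delete resources that were removed from the repo
  #       selfHeal: true   # revert live changes made outside Git
```

The manual step is only as strong as the permissions around it: anyone allowed to sync the prod Application can release, so the gate depends on RBAC as much as on sync policy.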

The CI pipeline change that made the handoff concrete: instead of running kubectl apply directly from CI, the pipeline pushed to the source branch and asked the GitOps controller to reconcile. Direct apply made the CI runner the deployment interface. The sync request made the controller the deployment interface, with visible status, history, and a drift signal if something changed outside the normal path.

kubectl apply made the person at the terminal the deployment interface. Pushing to Git and requesting a sync made the controller the deployment interface. One of those produces an audit trail and a visible current state. The other produces a shell history that lives in one person's session.

```bash
# Push the change to the branch the dev environment tracks.
git push origin dev

# CI validates the change, then asks the GitOps controller to reconcile.
argocd app sync archibus-dev

# Show the Application's sync status, health, and tracked revision.
argocd app get archibus-dev
```

The early ArgoCD work also included explicit RBAC, team-oriented branch workflow docs, and a structured model for which environments auto-deployed versus which required approval. The goal behind all of it was the same: make the cluster answerable by source and status instead of by whoever had been most recently working in it.
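ArgoCD's RBAC lives in the argocd-rbac-cm ConfigMap as Casbin-style policy lines. A minimal sketch of the auto-deploy versus approval split described above, assuming the default project, an argocd install namespace, hypothetical SSO groups named archibus-team and release-approvers, and hypothetical archibus-test and archibus-prod Application names: everyone can read status, the team can sync dev and test, and only approvers can sync prod.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-rbac-cm
  namespace: argocd                   # assumed ArgoCD install namespace
  labels:
    app.kubernetes.io/part-of: argocd
data:
  # Fallback role for every authenticated user: read-only access, so sync
  # and health status are visible to anyone who can log in.
  policy.default: role:readonly
  # Line formats:
  #   p, <subject>, <resource>, <action>, <project>/<application>, <effect>
  #   g, <sso-group>, <role>
  # Group names, archibus-test, and archibus-prod are hypothetical.
  policy.csv: |
    p, role:archibus-developer, applications, sync, default/archibus-dev, allow
    p, role:archibus-developer, applications, sync, default/archibus-test, allow
    p, role:archibus-release, applications, sync, default/archibus-prod, allow
    g, archibus-team, role:archibus-developer
    g, release-approvers, role:archibus-release
```

A read-only default fits the visibility goal: seeing what is deployed and whether it is healthy does not require permission to change it.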

What it became

That model held through the dev cluster work and then evolved further in later environments. The dev cluster still runs ArgoCD applications from that period, most of them healthy and synced. It is a dev cluster; not everything needs to be pristine, and that is fine.

In customer-test and ISM prod, Flux replaced ArgoCD as the GitOps owner in early 2026. The operating question stayed the same, but the answer got more explicit. Platform resources first. Secret providers and clones after. Chart-backed app releases next. Post-release extras last. The staged dependency graph lives in source, not in a runbook that depends on someone having been present for the original decisions.
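Expressed as Flux Kustomizations, that staged order becomes a chain of dependsOn references: Flux reconciles a Kustomization only after every Kustomization it depends on reports Ready. The sketch below covers the platform, secrets, and post-release stages; the helmreleases stage between them is the manifest excerpt later in this section. The environment-platform, environment-secrets, and environment-helmreleases names come from that excerpt. The environment-post-release name, every path, the flux-system namespace and GitRepository source, and the interval values are assumptions for illustration.

```yaml
# Stage 1: platform resources. No dependencies. wait: true means this stage
# reports Ready only after its applied resources pass health checks.
apiVersion: kustomize.toolkit.fluxcd.io/v1
kind: Kustomization
metadata:
  name: environment-platform
  namespace: flux-system                  # assumed namespace
spec:
  interval: 10m
  sourceRef:
    kind: GitRepository
    name: flux-system                     # assumed bootstrap source name
  path: ./flux/environment/platform       # hypothetical path
  prune: true
  wait: true
---
# Stage 2: secret providers and clones, reconciled only after the platform is Ready.
apiVersion: kustomize.toolkit.fluxcd.io/v1
kind: Kustomization
metadata:
  name: environment-secrets
  namespace: flux-system
spec:
  dependsOn:
    - name: environment-platform
  interval: 10m
  sourceRef:
    kind: GitRepository
    name: flux-system
  path: ./flux/environment/secrets        # hypothetical path
  prune: true
  wait: true
---
# Stage 4: post-release extras, held until the chart-backed releases
# (stage 3, environment-helmreleases) are Ready.
apiVersion: kustomize.toolkit.fluxcd.io/v1
kind: Kustomization
metadata:
  name: environment-post-release          # hypothetical name
  namespace: flux-system
spec:
  dependsOn:
    - name: environment-helmreleases
  interval: 10m
  sourceRef:
    kind: GitRepository
    name: flux-system
  path: ./flux/environment/post-release   # hypothetical path
  prune: true
```

Setting wait: true on the earlier stages is what gives "Ready" teeth: without it, a dependency can report Ready as soon as its manifests are applied, before the workloads behind them are actually healthy.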

dependsOn fields are not boilerplate. They are an operational assertion: these things must be ready before those things run. When that order lives in a manifest instead of in the operator's head, recovery does not require interrogating the original architect.

```yaml
apiVersion: kustomize.toolkit.fluxcd.io/v1
kind: Kustomization
metadata:
  name: environment-helmreleases
spec:
  # Excerpt: interval, sourceRef, and prune are omitted here.
  # Reconcile only after both of these Kustomizations report Ready.
  dependsOn:
    - name: environment-platform
    - name: environment-secrets
  # Wait for the applied resources to become ready before reporting Ready.
  wait: true
  path: ./flux/environment/helmreleases
```

The explicit dependency order in that manifest took about a year of environment work to earn. The Bash scripts from the research cluster made the manual steps legible. The ArgoCD experiments made the gap between "what is deployed" and "what the repo says" feel like a problem worth solving rather than a normal operating condition. The Flux staging took that further and made the full bootstrap order inspectable without a conversation.

The goal was not to remove me from the system. It was to stop being the only API.

The question underneath all of it, from the first ArgoCD experiment to the current Flux setup, has not changed: can someone understand and repair this environment from source and status, or do they have to ask me? Every piece of the current platform that has a good answer to that question got there because an earlier version had a bad one.